New tutorials/workshops

The new tutorials and workshops after 2020 will be managed at http://ming3d.com/videotutorial/

Workshop: Eye tracking with Tobii Pro Glasses

Location: CGC 4425E, DAAP Building

Time: 11:00 am, 09.25.2019.

Offered by Ming Tang, RA, LEED AP, DAAP, UC.

Sponsored by the 2018 Provost Group / Interdisciplinary Award

Web access (UC login required): Tobii Pro Eyetracker workshop source files

Online resources

Content covered:

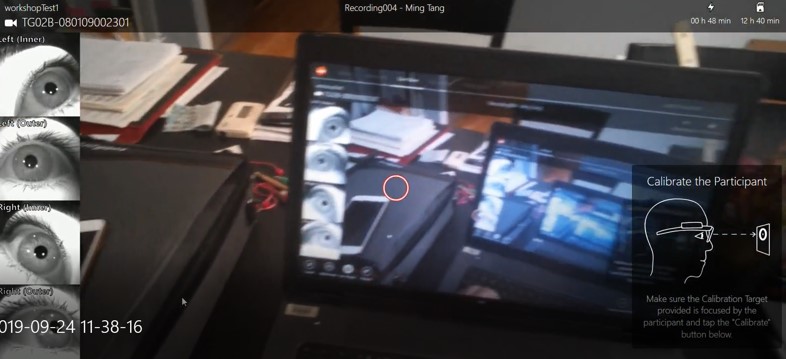

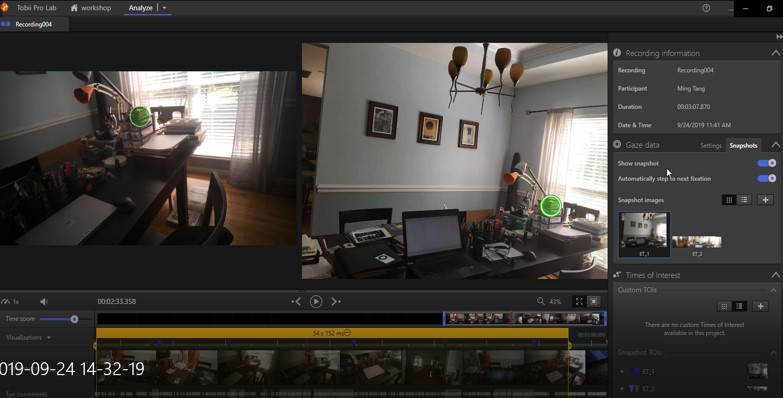

Connect to the WLAN (password “TobiiGlasses”) and run Tobii Pro Glasses Controller. The workshop covers the basic UI and workflow of the following software:

[videojs mp4="http://ming3d.com/videotutorial/videonew/Tutorial1_capture.mp4" poster="http://ming3d.com/videotutorial/videonew/Tutorial1_capture.JPG" width="400" height="225" preload="none"]

[videojs mp4="http://ming3d.com/videotutorial/videonew/Tutorial2_analysis.mp4" poster="http://ming3d.com/videotutorial/videonew/Tutorial2_analysis.JPG" width="400" height="225" preload="none"]

Reference

Tang, M. and Auffrey, C. “Advanced Digital Tools for Updating Overcrowded Rail Stations: Using Eye Tracking, Virtual Reality, and Crowd Simulation to Support Design Decision-Making.” Urban Rail Transit, December 19, 2018.

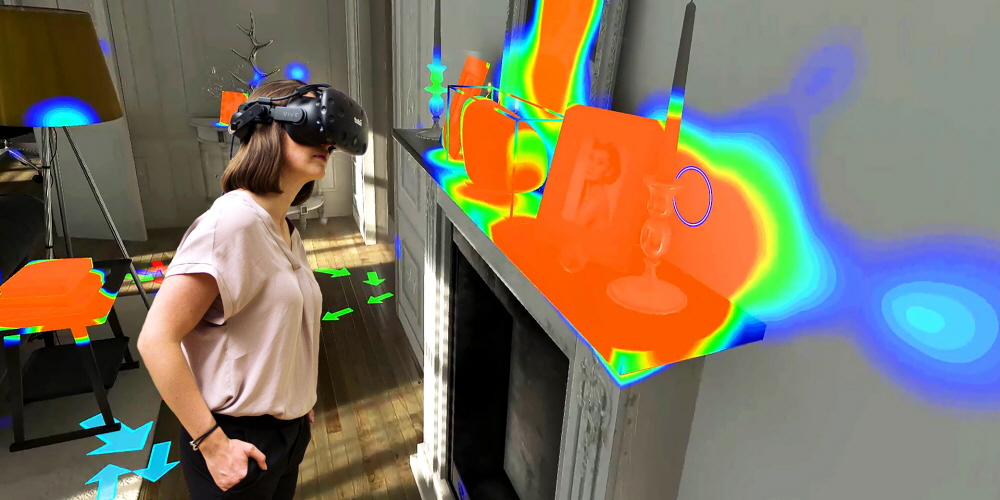

Eye-tracking devices measure eye position and eye movement, allowing researchers to document how elements of an environment draw viewers’ attention. In recent years, there have been a number of significant breakthroughs in eye-tracking technology, focused on new hardware and software applications. Wearable eye-tracking glasses, together with advances in video capture and virtual reality (VR), provide advanced tracking capability at greatly reduced prices. Given these advances, eye trackers are becoming important research tools in fields such as visual systems, design, psychology, wayfinding, cognitive science, and marketing. Currently, eye-tracking technologies are not readily available at UC, perhaps because of a lack of familiarity with this new technology and how it can be used for teaching and research, or because of a perceived steep learning curve.
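Analysis software such as Tobii Pro Lab typically quantifies how strongly an element draws attention by drawing areas of interest (AOIs) over the scene and summing the fixation durations that fall inside each one. The Python sketch below illustrates that idea only; it is not Tobii's implementation. It assumes fixations have already been detected and exported as pixel coordinates on the scene image with durations in milliseconds, and the `Fixation`, `AOI`, and `dwell_times` names, as well as the example AOIs, are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Fixation:
    # Assumed format: gaze point in scene-image pixels plus fixation duration.
    x: float
    y: float
    duration_ms: float


@dataclass
class AOI:
    # Hypothetical rectangular area of interest in the same pixel space.
    name: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def contains(self, f: Fixation) -> bool:
        return self.x_min <= f.x <= self.x_max and self.y_min <= f.y <= self.y_max


def dwell_times(fixations, aois):
    """Sum fixation durations inside each AOI (overlapping AOIs each get credit)."""
    totals = {a.name: 0.0 for a in aois}
    for f in fixations:
        for a in aois:
            if a.contains(f):
                totals[a.name] += f.duration_ms
    return totals


if __name__ == "__main__":
    aois = [AOI("signage", 0, 0, 200, 100), AOI("stairs", 300, 200, 600, 500)]
    fixations = [
        Fixation(50, 40, 220),
        Fixation(120, 80, 180),
        Fixation(400, 350, 300),
        Fixation(700, 700, 150),  # falls outside both AOIs
    ]
    print(dwell_times(fixations, aois))  # {'signage': 400.0, 'stairs': 300.0}
```

Rectangles keep the sketch short; real AOIs are often polygons drawn on a reference image, and glasses recordings first need gaze mapped from the moving scene camera onto that image.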

It has become clear to our UC faculty team that research and teaching will significantly benefit from utilizing these cutting-edge tools. It is also clear that a collective approach to acquiring the eye-tracking hardware and software, and training faculty on its application will ultimately result in greater faculty collaboration with its consequent benefits of interdisciplinary research and teaching.

The primary goals of the proposed project are to provide new research and teaching tools built on cutting-edge eye-tracking technologies and to involve interdisciplinary groups of UC faculty and students in their use. The project will broaden the knowledge base among faculty and open new perspectives on how to integrate eye-tracking technology into their work. It will also promote interdisciplinarity across the broader UC community.

Hardware: Tobii Pro Glasses, Tobii Pro VR

Software: Tobii Pro Lab, Tobii VR analysis

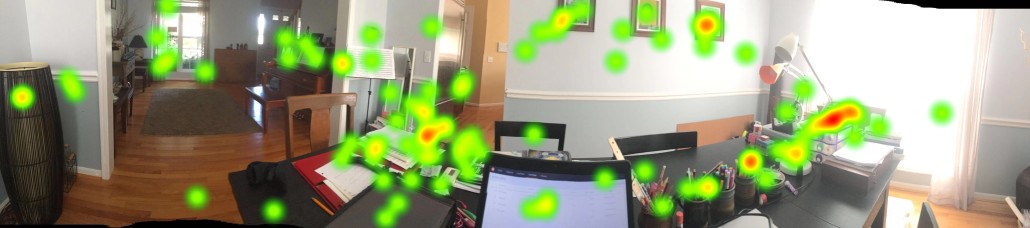

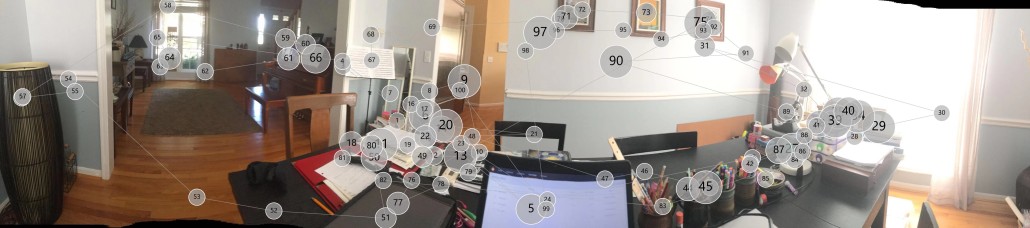

Test with Tobii Pro Glasses

Augmented Reality for Digital Fabrication. Projects from SAID, DAAP, UC. Fall 2018.

HoloLens, Fologram, Grasshopper.

Faculty: Ming Tang, RA, Associate Professor, University of Cincinnati

Students: Alexandra Cole, Morgan Heald, Andrew Pederson, Lauren Venesy, Daniel Anderi, Collin Cooper, Nicholas Dorsey, John Garrison, Gabriel Juriga, Isaac Keller, Tyler Kennedy, Nikki Klein, Brandon Kroger, Kelsey Kryspin, Laura Lenarduzzi, Shelby Leshnak, Lauren Meister, De’Sean Morris, Robert Peebles, Yiying Qiu, Jordan Sauer, Jens Slagter, Chad Summe, David Torres, Samuel Williamson, Dongrui Zhu, Todd Funkhouser.

Project team leads: Jordan Sauer, Yiying Qiu, Robert Peebles, David Torres.

Videos of work in progress

Nov. 2, 1:10 PM to 3:00 PM

Room 6221, DAAP, University of Cincinnati

With the recent development of head-mounted displays (HMDs), both Virtual Reality (VR) and Augmented Reality (AR) are being reintroduced into the design industry as Mixed Reality (MR) instruments. It has never been easier to design, visualize, and interact with immersive virtual worlds. This session will investigate the workflow for visualizing a design concept through AR and VR, using the virtual DAAP project as a vehicle to explore 3D simulation with mixed reality. Using the DAAP building at the University of Cincinnati as a wayfinding case study, the multi-phase approach starts by defining the immersive system, which captures participants’ movements within a digital environment as raw data in the cloud and then visualizes that data as heat maps and path networks. Survey data, combined with graphs, is also used to compare different agents’ wayfinding behavior in relation to gender, level of spatial recognition, and spatial features such as light, sound, and architectural elements. The project also compares the mixed reality technique with space syntax and multi-agent systems as wayfinding modeling methods.
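The step from captured movement data to heat maps and path networks can be illustrated with a small grid-binning sketch. This is not the project's actual pipeline: it assumes participant positions have been exported as (x, y) floor-plan coordinates in meters, and the function names `heat_map` and `path_network`, the grid cell size, and the sample track are hypothetical. The heat map counts position samples per grid cell; the path network counts moves between consecutive, distinct cells as directed edges.

```python
import math
from collections import Counter


def heat_map(positions, cell_size, width, height):
    """Count position samples per grid cell; indexed as grid[row][col] (row = y, col = x)."""
    cols = math.ceil(width / cell_size)
    rows = math.ceil(height / cell_size)
    grid = [[0] * cols for _ in range(rows)]
    for x, y in positions:
        if 0 <= x < width and 0 <= y < height:  # ignore samples outside the plan
            col = min(int(x // cell_size), cols - 1)
            row = min(int(y // cell_size), rows - 1)
            grid[row][col] += 1
    return grid


def path_network(positions, cell_size):
    """Count directed moves between consecutive, distinct cells, keyed as ((col, row), (col, row))."""
    cells = [(int(x // cell_size), int(y // cell_size)) for x, y in positions]
    edges = Counter()
    for a, b in zip(cells, cells[1:]):
        if a != b:  # staying inside the same cell is not a move
            edges[(a, b)] += 1
    return edges


if __name__ == "__main__":
    # Hypothetical track of one participant on a 2 m x 2 m plan, 1 m grid.
    track = [(0.5, 0.5), (1.5, 0.5), (1.6, 0.4), (1.5, 1.5)]
    print(heat_map(track, 1.0, 2.0, 2.0))  # [[1, 2], [0, 1]]
    print(path_network(track, 1.0))
```

Summing the grids and edge counts across participants gives an aggregate view; splitting them by survey group (for example, by gender) is one way to make the comparisons described above.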

This session combines a seminar with project demonstrations and develops techniques for VR and AR as they influence the process of visualizing and shaping human-computer interaction. It will discuss the connections among different immersive techniques for real-time visualization as a critical methodology in the design process, and it will also examine the current technical, physiological, and cognitive constraints related to immersion and interaction in virtual and augmented reality.

We just had another workshop in DAAP, presented by Michael Rogovin. The workshop was sponsored by the dFORM student organization at UC.

Link: http://2015.acadia.org/workshops.html

Shane Burger, Director of Design Technology at Woods Bagot

Ana Garcia Puyol, Computational Designer at CORE Studio Thornton Tomasetti

3 DAY WORKSHOP

Location: TUC Great Hall.

DATES: 19-21st OCT

UC Faculty Participation

Prof. Benjamin Britton, Prof. Jim Postell, Prof. Julia Wang, Prof. Ming Tang

Graduate students

Michael Rogovin, Craig Moyer

A group of UC faculty and graduate students participated in the Virtual Reality workshop at the 2015 ACADIA conference, hosted at the University of Cincinnati. Check out the pictures here.

CAPACITY: 12 SEATS

www.woodsbagot.com

core.thorntontomasetti.com

The field of Interaction Design (IxD) has come to dominate our experience of the digital world. As digital threads increasingly run through our everyday experience of the physical world, architects need to engage actively with IxD for the built environment. We need to move from prototyping form to prototyping experience.

This workshop will engage that new role by providing a simulation space for an architecture of dynamic experiences. Using a real-time visualization engine, workshop participants will design interactive environments that simulate active engagement within dynamic spaces. These dynamics could range from functional and comfort-related responses to more playful and artistic virtual installations that allow for creative collaboration among participants.

Workshop participants will learn the basics of interactivity in Grasshopper and how to connect their responsive designs to a custom-made WebGL environment. This environment will then be experienced in Virtual Reality using our mobile phones combined with inexpensive VR hardware such as Google Cardboard. Our prototype space will use an existing model of the DAAP Aronoff Center, our host for the ACADIA event.

Prerequisite Knowledge: Beginner Rhino & Grasshopper knowledge.

Hardware Requirements: Bring your own laptop as well as a cellphone and cellphone charger.

Software Requirements: Rhino 5, Grasshopper 0.9.0076, and Google Chrome installed on both the laptop and phone.